Overview

We all know that artificial intelligence is becoming more and more incorporated into our daily lives, from writing to driving. It was only natural that it would enter the world of art as well, which led to the rise of synthetic media.

In this article, I will give an overview of how AI generates art, with some details and a glimpse of the results. So let’s dive in.

What is Synthetic Media?

Synthetic media is a term that covers the artificial creation or modification of media by “machines” – specifically programs that rely on artificial intelligence and machine learning. In other words, it’s media that is crafted by technology.

Synthetic media today includes AI-written music, text generation, imagery and video, voice synthesis, and more. The field is ever-expanding as synthetic media scientists aim to disrupt more and more parts of traditional media, making new things simpler to create.

Have you heard of Deepfakes? That’s a perfect example of how interesting yet intimidating this new wave can be.

Now let’s jump into the workings and results of a powerful and creative algorithm, Neural Style Transfer, which is mainly used to generate new stylized images from the features of two or more images.

What is Neural Style Transfer?

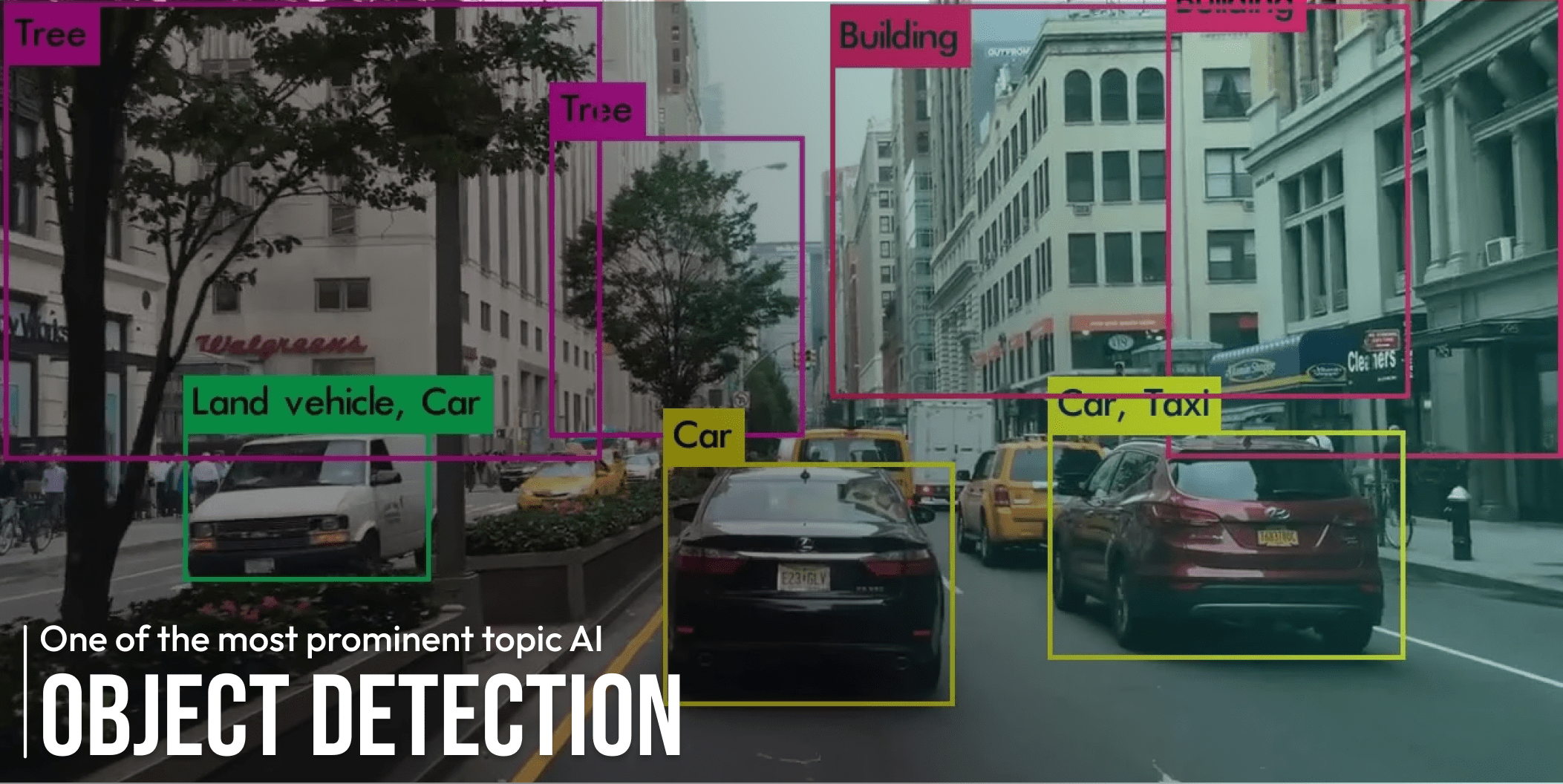

This technique falls within computer vision, a branch of artificial intelligence. It combines image processing with deep learning, using a convolutional neural network (CNN) such as VGG-19 to produce its results.

Major projects and platforms such as Google’s TensorFlow, Facebook’s PyTorch, DeepAI, and many more offer efficient pre-trained convolutional neural network models for style transfer that generate interesting and creative results.

How does Neural Style Transfer work?

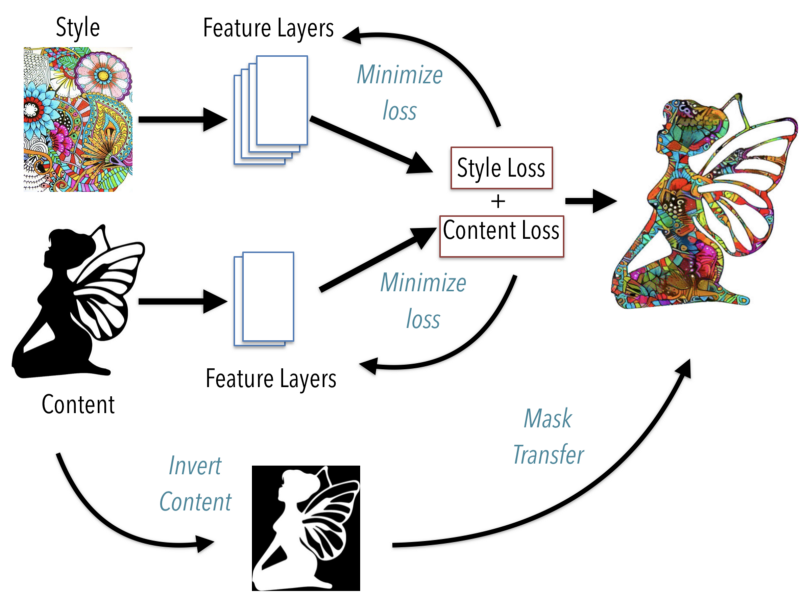

Neural Style Transfer is a method that enables us to generate an image with the same “content” as a base image, but with the “style” of our chosen picture.

NST uses a pre-trained Convolutional Neural Network with added loss functions to transfer style from one image to another and synthesize a newly generated image with the features we want to add.
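In the original optimization-based formulation of NST (Gatys et al.), these added loss functions are combined into one weighted objective. As a sketch, with $c$ the content image, $s$ the style image, $g$ the generated image, and $\alpha$, $\beta$ as user-chosen weights (the symbols are my own notation, not something defined elsewhere in this article):

$$
\mathcal{L}_{total}(g) = \alpha \, \mathcal{L}_{content}(c, g) + \beta \, \mathcal{L}_{style}(s, g)
$$

The generated image is then updated by gradient descent to make this total loss smaller, while the weights of the pre-trained network stay fixed. A larger $\beta$ relative to $\alpha$ pushes the result toward the style image.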

Here are the images the model works with:

A Content Image – the image we want to transfer a style to

A Style Image – the image whose style we want to transfer to the content image

A Generated Image – the output, a final blend of the content and style images

Raw image files mean nothing to the model. They first have to be converted into numeric arrays of pixel values, which the model then turns into a set of features. This is the job of a Convolutional Neural Network.

Thus, somewhere between the layer where the image is fed into the model and the layer that produces the output, the model works as a complex feature extractor. All we need from the model are its intermediate layers, whose outputs we use to describe the content and style of the input images.

With the diagram above in mind, the workings of the style transfer algorithm should now be fairly clear.
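To make the idea of describing content and style with intermediate layers more concrete, here is a minimal NumPy sketch. It assumes the common approach in which content is compared directly on feature maps and style is compared through Gram matrices (channel correlations). The function names are my own, and random arrays stand in for real CNN activations.

```python
import numpy as np

def gram_matrix(features):
    """Channel-by-channel correlations of one feature map of shape (H, W, C)."""
    h, w, c = features.shape
    flat = features.reshape(h * w, c)   # one row per spatial position
    return flat.T @ flat / (h * w)      # (C, C), averaged over positions

def content_loss(generated_feats, content_feats):
    """Mean squared difference between two feature maps of the same layer."""
    return float(np.mean((generated_feats - content_feats) ** 2))

def style_loss(generated_feats, style_feats):
    """Mean squared difference between the Gram matrices of two feature maps."""
    diff = gram_matrix(generated_feats) - gram_matrix(style_feats)
    return float(np.mean(diff ** 2))

# Random arrays stand in for real CNN activations (an assumption for illustration).
rng = np.random.default_rng(0)
content_feats = rng.normal(size=(8, 8, 16))
style_feats = rng.normal(size=(8, 8, 16))

# Shuffling spatial positions changes the layout but keeps the channel correlations.
shuffled = content_feats.reshape(-1, 16)[rng.permutation(64)].reshape(8, 8, 16)

print(content_loss(shuffled, content_feats))    # positive: the layout differs
print(style_loss(shuffled, content_feats))      # approximately zero: same correlations
print(style_loss(content_feats, style_feats))   # positive: different correlations
```

The shuffled example shows why the Gram matrix is a reasonable stand-in for style: it ignores where things are in the image and only records which features tend to occur together.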

3 ways to implement neural style transfer

There are many complete and customized solutions as well as pre-trained models available for this algorithm and I’ll share some of them with you.

1-TensorFlow Neural Style Transfer with a pre-trained model from TF Hub

Load the essentials

import tensorflow as tf

import tensorflow_hub as hub

import matplotlib.pyplot as plt

import numpy as np

import cv2

Read the content and style images
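The `get_data` helper called in the next lines is not defined in this article. Here is a minimal sketch of what such a helper might look like, assuming the TF Hub model expects a float32 batch of RGB images with values in [0, 1]. Both `to_model_input` and this version of `get_data` are hypothetical stand-ins, not the original code.

```python
import numpy as np

def to_model_input(rgb_image):
    """Convert an (H, W, 3) uint8 RGB array into a (1, H, W, 3) float32 batch in [0, 1]."""
    scaled = rgb_image.astype(np.float32) / 255.0
    return scaled[np.newaxis, ...]      # add the leading batch dimension

def get_data(path, size=None):
    """Hypothetical stand-in for the undefined get_data helper."""
    import cv2  # imported here so to_model_input can be used without OpenCV

    bgr = cv2.imread(path)              # OpenCV loads images in BGR channel order
    if bgr is None:
        raise FileNotFoundError(f"Could not read image: {path}")
    rgb = cv2.cvtColor(bgr, cv2.COLOR_BGR2RGB)
    if size is not None:
        rgb = cv2.resize(rgb, size)     # size is (width, height)
    return to_model_input(rgb)

# Quick check of the preprocessing on a synthetic, all-red image.
demo = np.zeros((4, 6, 3), dtype=np.uint8)
demo[..., 0] = 255
batch = to_model_input(demo)
print(batch.shape, batch.dtype)         # (1, 4, 6, 3) float32
```

Passing something like `size=(256, 256)` for the style image may be worth trying, since this model is reported to work best with style images around that size.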

content_image = get_data('Bridge.jfif')

style_image = get_data('Style Image.jpg')

Load the pre-trained TensorFlow model from TF Hub

model_link = "https://tfhub.dev/google/magenta/arbitrary-image-stylization-v1-256/2"

NST_model = hub.load(model_link)

Get results

generated_image = NST_model(tf.constant(content_image), tf.constant(style_image))[0]

2-PyTorch Neural Style Transfer with a module

Install the Dependencies

!nvidia-smi

!git clone https://github.com/crowsonkb/style-transfer-pytorch

!pip install -e ./style-transfer-pytorch

Load Images

!curl -OL 'https://raw.githubusercontent.com/jcjohnson/neural-style/master/examples/inputs/golden_gate.jpg'

!curl -OL 'https://raw.githubusercontent.com/jcjohnson/neural-style/master/examples/inputs/starry_night.jpg'

Get Results

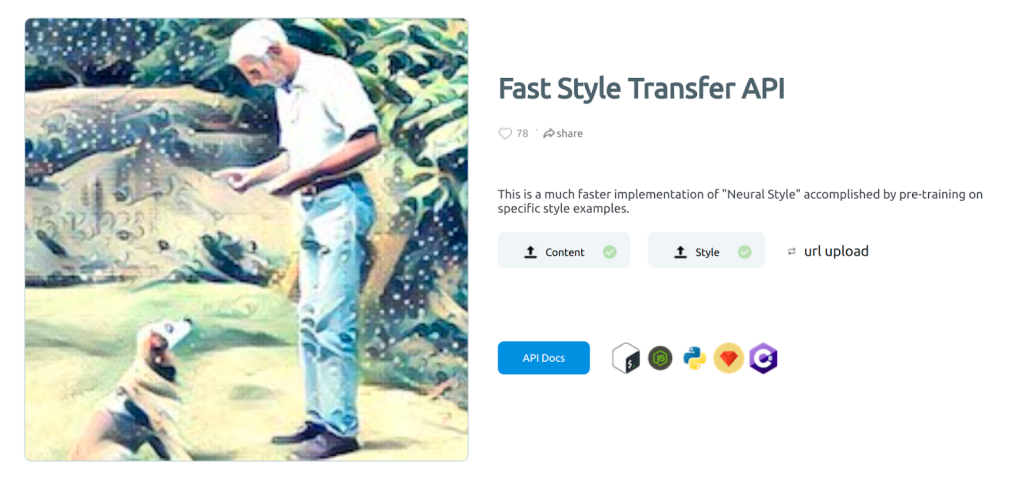

!style_transfer golden_gate.jpg starry_night.jpg -s 512

3-DeepAI Neural Style Transfer Solution API

You can use their trial through the following webpage:

Or use their API

The results produced by it look like this

Finally, let’s look at some of the real-world applications of the Neural Style Transfer.

Applications of Neural Style Transfer

Photo and video editors

Style transfer is commonly used in video and photo editing software.

Deep learning-based style transfer models can run on devices such as mobile phones, letting users style images and videos in real time.

Art and entertainment

Style transfer also offers new techniques that can change the way we look at and engage with art.

It makes expensive, highly regarded artworks reproducible for office and home decor or for advertisements. Style transfer models might also help commercialize art.

Gaming and Virtual reality

Some cloud-powered video game streaming platforms use image style transfer.

These models let developers build interactive environments with customized artistic styles for users. This gives the game a distinctive look and brings out the artist inside every developer.

Final Words

In this article, we briefly covered what neural style transfer is, how it works, ways to implement it, and its applications.

Today, many photo editing apps and solutions use the neural style transfer algorithm. As you have seen for yourself, it can produce fascinating art in very little time, which comes in handy in many fields and can save artists days of effort. Also, it’s always fun to try out new stuff, so cheers!